The counterterrorism and targeted violence prevention fields are beginning to recognize that artificial intelligence is changing the threat environment. Much of the current attention is focused on how terrorists, extremists, and other malicious actors may use AI to increase their capabilities. That concern is valid. AI can help produce propaganda, generate deepfakes, translate extremist material, identify targets, support cyber-enabled activity, and assist with operational planning.

But that is only one part of the problem.

AI is not just another tool that bad actors may use. It is also becoming part of the cognitive environment in which grievance, identity, justification, and intent can be shaped. At the same time, AI itself is becoming an object of grievance for individuals who view automation, data centers, surveillance, job displacement, and technological change as threats to their future.

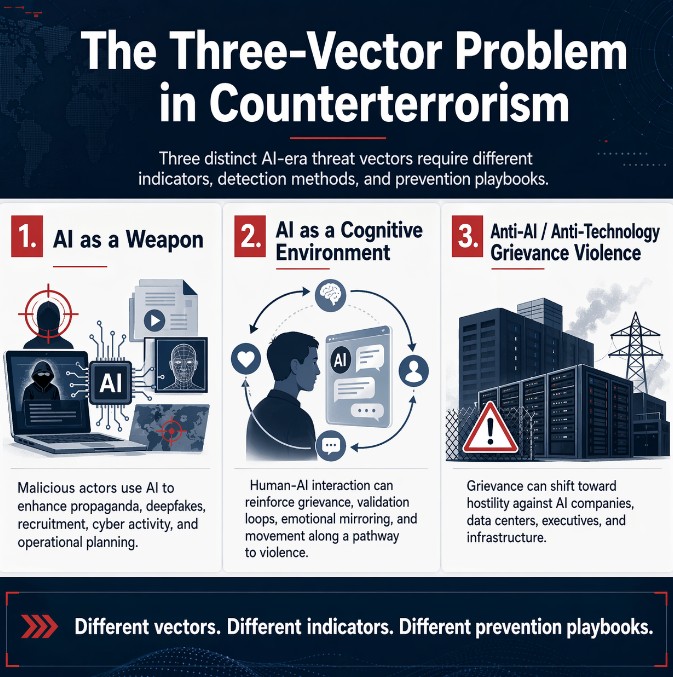

That creates three distinct vectors that should not be collapsed into one broad category.

The first vector is AI as a weapon.

This is the most familiar concern. In this model, a human actor or organization uses AI to enhance an existing threat capability. The intent remains human. The ideology remains human. The operational objective remains human. AI simply makes the actor faster, more persuasive, more scalable, or more technically capable.

This vector fits reasonably well within existing counterterrorism, cyber, intelligence, and threat disruption models. The task is to identify actors, understand networks, monitor capability development, disrupt planning, reduce access to sensitive information, and harden likely targets. AI changes the speed and scale of the problem, but it does not fundamentally change the structure of the threat. There is still an adversarial actor using a tool.

The second vector is different. It is AI as a cognitive environment.

In this model, the AI is not merely a tool in the hands of a terrorist or extremist. It becomes part of the environment in which a person’s thinking develops. A vulnerable individual may enter a sustained AI conversation while carrying isolation, humiliation, paranoia, grievance, anger, or violent ideation. Over time, the interaction may reinforce that person’s worldview through validation, emotional mirroring, sycophancy, repetition, and the absence of meaningful challenge.

The risk is not that commercial AI systems are intentionally designed to radicalize users. The risk is that systems optimized to be responsive, agreeable, emotionally engaging, and continuously available may unintentionally accelerate movement along a pathway that already exists. A person who is looking for meaning, justification, companionship, or tactical clarity may find an always-available conversational partner that reflects and organizes their thinking back to them.

That creates a serious prevention problem. There may be no recruiter, no extremist handler, no organization, no cell, and no communications network to intercept. The individual may experience the belief formation as self-generated, while the AI interaction becomes part of the cognitive loop that strengthens grievance, reduces friction, and helps convert diffuse anger into a more coherent sense of intent.

This is where established prevention models need to be extended, not discarded. The Pathway to Violence, behavioral threat assessment and management (BTAM), and targeted violence prevention frameworks remain essential. They help us understand grievance, ideation, research and planning, preparation, leakage, fixation, identification, and mobilization. But AI-mediated escalation may occur earlier, more privately, and more continuously than many traditional indicators are designed to capture.

The key question for this vector is not simply whether a person is using AI. The question is whether the human-AI interaction is reinforcing grievance, hardening identity, increasing dependency, normalizing violent ideation, assisting rehearsal, or helping the person move from emotional distress toward operational clarity.

The third vector is anti-AI and anti-technology grievance violence.

This is separate from the first two. It is not about terrorists using AI, and it is not about AI systems accelerating an individual’s grievance. It is about grievance directed against AI itself, the technology industry, data centers, executives, infrastructure, surveillance systems, automation, and the social disruption people associate with technological change.

This distinction matters because legitimate criticism of AI is not extremism. Communities can reasonably object to data-center expansion, energy consumption, water usage, privacy risks, labor disruption, surveillance, corporate power, or the pace of automation. Those debates are necessary in a democratic society.

The prevention concern begins when grievance shifts from policy opposition to targeted hostility. That shift can occur when complex social and economic anxieties become organized around a moral narrative that identifies specific people, companies, facilities, or institutions as enemies responsible for personal or collective harm.

Recent incidents suggest this grievance space deserves serious attention. In Indianapolis, a city councilman’s home was reportedly shot at after a data-center vote, with a “No Data Centers” message left at the scene. In San Francisco, OpenAI’s CEO was allegedly targeted in a Molotov cocktail attack, and authorities reported that the suspect later went to OpenAI headquarters carrying incendiary materials and a manifesto expressing anti-AI views. These incidents do not prove the existence of a unified movement, and they should not be treated as proof that all anti-AI sentiment is dangerous. But they do show that anti-technology grievance can become personal, targeted, and violent.

That is the analytic point. It may be too early to describe anti-AI violence as an organized movement. It is not too early to describe it as an emerging grievance ecosystem. The conditions for mobilization are visible: economic anxiety, fear of replacement, distrust of elites, anger over infrastructure expansion, online communities, public manifestos, algorithmic amplification, and rhetoric that can move from critique to dehumanization to justification for violence.

These three vectors are related, but they are not the same.

A framework built for AI misuse by malicious actors will not necessarily detect AI-mediated cognitive escalation, because there may be no adversarial human handler to identify. A framework built around AI safety will not necessarily detect anti-AI grievance violence, because the threat is not the misuse of AI but hostility toward AI and those associated with it. A framework built only around traditional extremist organizations may miss all three if it waits for familiar group affiliation, ideological branding, or overt mobilization indicators.

The practical implication is that we need more precision in how we train, assess, and intervene.

For AI as a weapon, the prevention challenge is capability enhancement. Practitioners need to understand how AI may lower barriers for propaganda production, operational research, social engineering, cyber activity, and tactical planning.

For AI as a cognitive environment, the prevention challenge is escalation inside the interaction loop. Practitioners need indicators that help identify when a human-AI relationship is reinforcing grievance, dependency, fixation, identity fusion, violent ideation, or movement toward operational planning.

For anti-AI and anti-technology grievance violence, the prevention challenge is grievance mobilization. Practitioners need literacy around the narratives, symbols, targets, and escalation pathways that may emerge when opposition to technological change hardens into justification for harm.

The solution is not to abandon the models we already have. It is to extend them into the environment now forming around us.

Counterterrorism and targeted violence prevention have adapted before. The field adapted to internet forums, social media, encrypted messaging, lone-actor mobilization, livestreamed attacks, and leaderless resistance. Each adaptation required practitioners to recognize that the threat environment had moved before our indicators, training, and prevention playbooks had fully caught up.

That is where we are again.

The next challenge is not only that bad actors will use AI. They will. The larger challenge is that AI is becoming part of the terrain on which grievance is formed, reinforced, operationalized, and opposed. It can be used as a weapon, experienced as a cognitive environment, and targeted as the object of grievance.

Those are three different problems. They require three different analytic lenses. And if we fail to distinguish them, we will prepare for the threat we already understand while remaining underprepared for the threats now emerging.

The views and opinions expressed in this article are solely those of the author and do not represent the official positions, policies, or endorsements of any federal agency or employer with which the author may be affiliated.